Search engine results pages (SERPs) are the battleground where visibility, traffic, and revenue are won or lost. For SEO and PPC professionals, collecting SERP data at scale is not optional—it is foundational. Whether you are analyzing ranking trends, tracking competitors, monitoring ad placements, or extracting featured snippet opportunities, scalable SERP data collection enables smarter, faster, and more defensible decisions.

TL;DR: Collecting SERP data at scale allows SEO and PPC teams to monitor rankings, analyze competitors, track SERP features, and optimize ad spend effectively. The most reliable approach combines compliant data sources, robust infrastructure, intelligent parsing, and automation. Businesses should prioritize accuracy, scalability, and legal considerations when building or selecting SERP data solutions. Done correctly, large-scale SERP tracking becomes a competitive advantage rather than a technical burden.

Why SERP Data Matters in SEO and PPC

SERP data is more than keyword rankings. Modern search results include paid ads, featured snippets, local packs, shopping results, videos, AI-generated summaries, and more. Each of these elements affects visibility and click-through rates.

For SEO teams, SERP data helps:

- Track keyword rankings across locations and devices

- Identify featured snippet and rich result opportunities

- Monitor algorithm update impacts

- Analyze competitor visibility shifts

- Measure share of voice

For PPC teams, SERP data enables:

- Monitoring ad placements and estimating impression share

- Analyzing competitor ad copy

- Tracking changes in paid search density

- Estimating cost pressure trends

- Auditing branded search terms for defense

When these insights are scaled across thousands—or millions—of keywords, they reveal structural opportunities and risks that manual tracking could never uncover.

The Core Challenges of Collecting SERP Data at Scale

Scaling SERP data collection is complex. Search engines actively defend against automated scraping, and results are highly dynamic, personalized, and location-sensitive.

Key challenges include:

- IP blocking and rate limiting

- CAPTCHAs and bot detection systems

- Personalized search variation

- Localization and geo-rendering differences

- Device-specific results

- Frequent SERP layout changes

Additionally, data collection must comply with legal and platform-specific policies. Enterprises must balance collection volume against responsible request rates to avoid blocks and operational disruption.

Approaches to Collecting SERP Data

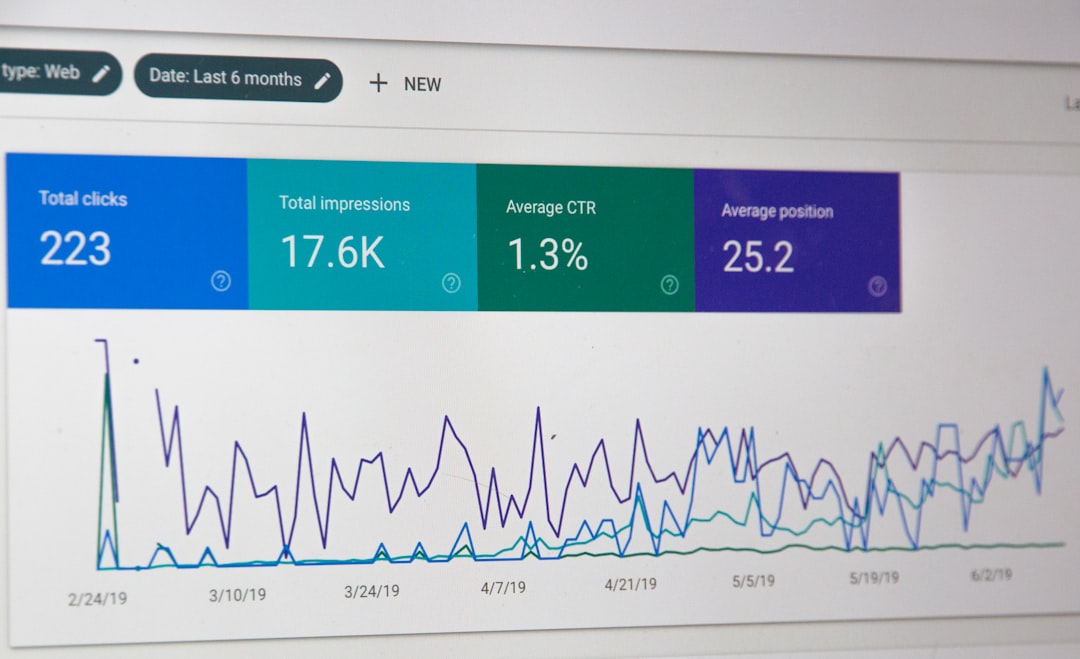

1. Using Official APIs

Some search platforms provide official APIs for limited datasets, such as Search Console data or advertising performance metrics. These are compliant and stable, but they cover only your own properties and accounts, so they lack visibility into competitor data and full-page SERP composition.

Advantages:

- High reliability

- Compliance with platform policies

- Structured datasets

Limitations:

- Restricted access

- Limited competitor intelligence

- Not a full representation of live SERPs

2. Third-Party SERP Data Providers

Many SEO platforms aggregate SERP data and provide it via APIs. This option allows companies to focus on analysis rather than infrastructure.

Advantages:

- Scalable immediately

- Maintenance handled externally

- Global tracking capabilities

Limitations:

- Cost at large scale

- Data freshness may vary

- Dependency on vendor reliability

3. Building an In-House SERP Collection System

Large enterprises or data-centric organizations often build their own systems. This approach offers maximum control but requires robust engineering capabilities.

Core components include:

- Distributed request infrastructure

- Rotating residential or data center proxies

- Browser emulation or headless environments

- SERP HTML parsing engine

- Database storage and indexing

- Monitoring and retry logic
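As one illustration of the retry logic mentioned in the list above, here is a minimal sketch of exponential backoff with jitter. The `fetch` callable and the `TransientError` exception are hypothetical placeholders for whatever fetcher an in-house system uses, and the attempt count and base delay are assumed values.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical error a fetcher raises for failures worth retrying."""


def fetch_with_retry(fetch, keyword, attempts=4, base_delay=1.0,
                     sleep=time.sleep, rng=random.random):
    """Call fetch(keyword), retrying transient failures with backoff."""
    for attempt in range(attempts):
        try:
            return fetch(keyword)
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the failure to monitoring
            # Exponential backoff with jitter: wait up to base * 2^attempt seconds.
            sleep(rng() * base_delay * (2 ** attempt))
```

Passing `sleep` and `rng` as parameters keeps the function easy to test without real waiting.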

Infrastructure Considerations for Scale

When collecting millions of keyword results per month, infrastructure design becomes critical.

Proxy Management

To avoid blocking, distributed IP networks are essential. Systems rotate IP addresses, vary request timing, and simulate realistic browsing behavior.

Effective proxy strategy should include:

- Geographically targeted IP pools

- Residential and mobile IP mixes

- Automatic failure detection and rotation
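The proxy strategy above can be sketched as a small pool that filters by country, benches proxies after repeated failures, and adds jitter to request timing. All names here (`Proxy`, `ProxyPool`, `jittered_delay`), the failure threshold, and the jitter range are assumptions made for illustration, not a reference design.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Proxy:
    url: str            # placeholder address, not a real endpoint
    country: str        # country code used for geo-targeting
    kind: str           # "residential", "mobile", or "datacenter"
    failures: int = 0   # consecutive failures observed so far


@dataclass
class ProxyPool:
    proxies: list
    max_failures: int = 3  # assumed threshold before a proxy is benched
    rng: random.Random = field(default_factory=random.Random)

    def healthy(self, country: str) -> list:
        """Proxies in the requested country that are below the failure limit."""
        return [p for p in self.proxies
                if p.country == country and p.failures < self.max_failures]

    def pick(self, country: str) -> Proxy:
        candidates = self.healthy(country)
        if not candidates:
            raise LookupError(f"no healthy proxy for {country}")
        return self.rng.choice(candidates)

    def report(self, proxy: Proxy, ok: bool) -> None:
        """Reset the failure count on success, increment it on failure."""
        proxy.failures = 0 if ok else proxy.failures + 1


def jittered_delay(base_seconds: float, rng: random.Random) -> float:
    """Vary request timing: the base delay plus up to 50% random jitter."""
    return base_seconds * (1 + rng.uniform(0, 0.5))
```

A production pool would likely also re-test benched proxies after a cooldown; that is left out here to keep the sketch short.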

Location and Device Simulation

SERPs differ dramatically by:

- Country

- City

- Language

- Device type

An enterprise solution must replicate real-world user conditions. That includes mobile user agents, viewport sizes, and localized parameters.
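One way to keep these conditions explicit is to describe each collection request with a profile object. The sketch below assumes a hypothetical endpoint (`serp-provider.example`) and made-up parameter names and viewport sizes; a real provider or browser setup will use its own.

```python
from dataclasses import dataclass
from urllib.parse import urlencode


@dataclass(frozen=True)
class SearchProfile:
    country: str    # e.g. "us"
    city: str       # e.g. "Austin"
    language: str   # e.g. "en"
    device: str     # "desktop" or "mobile"


# Hypothetical viewport sizes per device type; adjust to the devices you track.
VIEWPORTS = {"desktop": (1366, 768), "mobile": (390, 844)}


def build_request(keyword: str, profile: SearchProfile) -> dict:
    """Describe one collection request for a hypothetical SERP endpoint."""
    width, height = VIEWPORTS[profile.device]
    query = urlencode({
        "q": keyword,
        "country": profile.country,
        "city": profile.city,
        "lang": profile.language,
        "device": profile.device,
    })
    return {
        "url": f"https://serp-provider.example/search?{query}",
        "viewport": {"width": width, "height": height},
    }
```

Storing the profile alongside each result makes it possible to compare like with like later, for example mobile results in one city over time.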

Scalable Storage Architecture

Each SERP may contain dozens of structured elements: organic listings, ads, images, local results, FAQs, video carousels, and AI summaries. Storing raw HTML plus parsed structured fields allows reprocessing when SERP layouts change.

Best practice:

- Store raw HTML snapshots

- Extract normalized structured fields

- Index by keyword, date, location, and device

- Maintain historical archives for trend analysis
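The practices above map naturally onto two tables: one for raw snapshots and one for parsed items. The following is a minimal sketch using SQLite from the Python standard library; the table and column names are assumptions, and a system at real scale would more likely use a warehouse or object storage for the raw HTML.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS serp_snapshot (
    id INTEGER PRIMARY KEY,
    keyword TEXT NOT NULL,
    captured_on TEXT NOT NULL,   -- ISO date, e.g. 2024-05-01
    location TEXT NOT NULL,
    device TEXT NOT NULL,
    raw_html TEXT NOT NULL,      -- kept so results can be re-parsed later
    UNIQUE (keyword, captured_on, location, device)
);
CREATE TABLE IF NOT EXISTS serp_item (
    snapshot_id INTEGER NOT NULL REFERENCES serp_snapshot(id),
    item_type TEXT NOT NULL,     -- "organic", "ad", "featured_snippet", ...
    position INTEGER NOT NULL,
    url TEXT,
    title TEXT
);
CREATE INDEX IF NOT EXISTS idx_item_snapshot ON serp_item (snapshot_id);
"""


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def save_snapshot(conn, keyword, captured_on, location, device, raw_html, items):
    """Store one raw snapshot plus its parsed items; return the snapshot id."""
    cur = conn.execute(
        "INSERT INTO serp_snapshot (keyword, captured_on, location, device, raw_html)"
        " VALUES (?, ?, ?, ?, ?)",
        (keyword, captured_on, location, device, raw_html),
    )
    snapshot_id = cur.lastrowid
    conn.executemany(
        "INSERT INTO serp_item VALUES (?, ?, ?, ?, ?)",
        [(snapshot_id, i["type"], i["position"], i.get("url"), i.get("title"))
         for i in items],
    )
    conn.commit()
    return snapshot_id


def rank_history(conn, keyword, location, device, domain):
    """Return (date, best organic position) rows for URLs containing `domain`."""
    return conn.execute(
        "SELECT s.captured_on, MIN(i.position) FROM serp_snapshot s"
        " JOIN serp_item i ON i.snapshot_id = s.id"
        " WHERE s.keyword = ? AND s.location = ? AND s.device = ?"
        " AND i.item_type = 'organic' AND i.url LIKE ?"
        " GROUP BY s.captured_on ORDER BY s.captured_on",
        (keyword, location, device, f"%{domain}%"),
    ).fetchall()
```

The `UNIQUE` constraint doubles as the index on keyword, date, location, and device, and it also prevents duplicate snapshots for the same combination.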

SERP Parsing and Data Structuring

Collecting HTML is only the first step. The real value lies in structured extraction.

Parsing should identify:

- Organic rankings and URLs

- Paid ads (top and bottom)

- Featured snippets

- People Also Ask boxes

- Local map pack results

- Product listings and shopping ads

- Video carousels

Because search layouts change frequently, parsing systems should be modular and versioned. Machine learning-based pattern detection can help adapt to layout changes faster than rigid XPath rules alone.
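To make "modular and versioned" concrete, the sketch below registers one parser per layout version and tries the newest first. The class names `result` and `organic-card` are invented markup for illustration only; they do not describe any real search engine's HTML. It uses the standard-library `html.parser` module to stay dependency-free.

```python
from html.parser import HTMLParser


class _FirstLinkCollector(HTMLParser):
    """Collect the first link inside each <div> carrying a given class."""

    def __init__(self, container_class: str):
        super().__init__()
        self.container_class = container_class
        self.depth = 0           # nesting depth inside a matching container
        self.need_link = False   # True until the container's first link is seen
        self.urls = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if self.depth:
            if tag == "div":
                self.depth += 1
            elif tag == "a" and self.need_link and attrs.get("href"):
                self.urls.append(attrs["href"])
                self.need_link = False
        elif tag == "div" and self.container_class in (attrs.get("class") or "").split():
            self.depth, self.need_link = 1, True

    def handle_endtag(self, tag):
        if self.depth and tag == "div":
            self.depth -= 1


def _collect(html: str, container_class: str) -> list:
    collector = _FirstLinkCollector(container_class)
    collector.feed(html)
    return [{"position": n, "url": u} for n, u in enumerate(collector.urls, start=1)]


# Layout version -> parser. Add a new entry when the layout changes; keep old
# entries so archived raw HTML can still be re-parsed.
PARSERS = {
    1: lambda html: _collect(html, "result"),        # hypothetical old layout
    2: lambda html: _collect(html, "organic-card"),  # hypothetical new layout
}


def parse_organic(html: str):
    """Try parser versions newest first; return (version, items)."""
    for version in sorted(PARSERS, reverse=True):
        items = PARSERS[version](html)
        if items:
            return version, items
    return None, []  # nothing matched: likely a block page or a new layout
```

Recording the parser version with each parsed row makes it easier to spot when a layout change started and which snapshots need reprocessing.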

Automation and Scheduling

Large-scale collection requires disciplined scheduling.

Consider dividing keywords by:

- Priority tier: money terms vs. informational

- Volatility: highly competitive sectors require more frequent checks

- Geographic scope: national vs. local

High-value PPC keywords may require daily or hourly tracking. Long-tail SEO keywords may be monitored weekly.

Queue-based job orchestration systems can distribute requests responsibly while avoiding traffic spikes that trigger defenses.
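A tiered schedule like this can be sketched with a priority queue keyed on each keyword's next due time. The tier names and intervals below are assumptions chosen to mirror the hourly, daily, and weekly cadences described above, and the `limit` argument is what caps each batch to avoid traffic spikes.

```python
import heapq
from datetime import datetime, timedelta

# Assumed refresh intervals per tier.
INTERVALS = {
    "ppc_high_value": timedelta(hours=1),
    "seo_core": timedelta(days=1),
    "seo_long_tail": timedelta(weeks=1),
}


class Scheduler:
    def __init__(self):
        self._heap = []  # entries are (due_time, keyword, tier)

    def add(self, keyword: str, tier: str, first_due: datetime) -> None:
        heapq.heappush(self._heap, (first_due, keyword, tier))

    def pop_due(self, now: datetime, limit: int) -> list:
        """Return up to `limit` due keywords and reschedule each one."""
        due = []
        while self._heap and self._heap[0][0] <= now and len(due) < limit:
            _, keyword, tier = heapq.heappop(self._heap)
            due.append(keyword)
            # Next check is one tier interval after this collection time.
            heapq.heappush(self._heap, (now + INTERVALS[tier], keyword, tier))
        return due
```

A worker loop would call `pop_due` on a fixed tick and hand the returned keywords to the fetch queue; anything over the limit simply stays due for the next tick.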

Compliance, Governance, and Risk Management

Organizations must carefully assess:

- Terms of service implications

- Regional data processing laws

- Internal risk tolerance

- Ethical scraping boundaries

Legal counsel should review large-scale scraping initiatives. Additionally, rate controls and responsible engineering practices reduce both operational and reputational risk.

Turning SERP Data into Strategic Value

Raw data is only useful if translated into insight.

SEO Use Cases

- Measuring true visibility beyond rank position

- Detecting SERP feature expansion trends

- Gap analysis versus major competitors

- Identifying cannibalization across domains

- Monitoring recovery after algorithm updates
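Measuring visibility beyond rank position usually means weighting each position by how many clicks it is likely to earn. The sketch below computes a simple share-of-voice figure under that idea. The click-through weights are placeholder numbers, not measured values, and the function and argument names are illustrative.

```python
# Illustrative CTR-by-position weights. These are placeholders, not measured
# values; replace them with a curve fitted to your own click data.
ASSUMED_CTR = {1: 0.30, 2: 0.15, 3: 0.10, 4: 0.07, 5: 0.05}


def share_of_voice(serps: dict, volumes: dict) -> dict:
    """Estimate each domain's share of expected clicks.

    serps:   keyword -> ordered list of ranking domains (position 1 first)
    volumes: keyword -> monthly search volume
    """
    scores = {}
    for keyword, domains in serps.items():
        for position, domain in enumerate(domains, start=1):
            weight = ASSUMED_CTR.get(position, 0.0) * volumes.get(keyword, 0)
            scores[domain] = scores.get(domain, 0.0) + weight
    total = sum(scores.values())
    return {d: s / total for d, s in scores.items()} if total else {}
```

With this weighting, a domain ranking first on a high-volume keyword can outweigh a competitor ranking first on several low-volume ones, which plain average rank would hide.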

PPC Use Cases

- Competitive ad intelligence analysis

- Monitoring brand bidding competitors

- Tracking changes in top-of-page density

- Identifying emerging advertisers

- Testing ad copy differentiation strategies

The most advanced teams integrate SERP data into:

- Business intelligence platforms

- Forecasting models

- Attribution systems

- Budget allocation algorithms

Performance Monitoring and Quality Control

At scale, data reliability is easily compromised. Implement checks such as:

- Validation against known rankings

- Random HTML audits

- Error rate monitoring dashboards

- Detection of abnormal ranking volatility spikes

Automated anomaly detection can flag scraping failures before they distort reporting decisions.
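A simple form of this check compares today's average rank movement against a recent baseline. The sketch below is one possible approach under stated assumptions: missing keywords are treated as rank 101, at least five days of history are required, and a z-score above 3 counts as anomalous. All three values are arbitrary starting points.

```python
from statistics import mean, pstdev


def volatility(prev: dict, curr: dict, missing_rank: int = 101) -> float:
    """Mean absolute rank change across keywords seen in either run."""
    keywords = prev.keys() | curr.keys()
    if not keywords:
        return 0.0
    return mean(abs(curr.get(k, missing_rank) - prev.get(k, missing_rank))
                for k in keywords)


def is_anomalous(history: list, today: float, z_threshold: float = 3.0) -> bool:
    """Flag today's volatility if it sits far above the recent baseline."""
    if len(history) < 5:
        return False  # not enough baseline to judge
    baseline, spread = mean(history), pstdev(history)
    if spread == 0:
        return today > baseline
    return (today - baseline) / spread > z_threshold
```

A flagged day is not necessarily a scraping failure, since a real algorithm update produces the same signal, so the flag is best used to trigger a raw HTML audit before the data reaches reports.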

Future-Proofing SERP Data Collection

Search engines increasingly integrate AI-generated answers, zero-click experiences, and interactive widgets. These features can reduce traditional organic click-through rates and introduce new data dimensions to track.

Forward-looking systems should:

- Capture AI summary presence indicators

- Monitor answer box prevalence

- Track engagement-related signals indirectly

- Adapt parser logic rapidly when layouts shift

Organizations that treat SERP data collection as an evolving engineering discipline—rather than a static scraping task—maintain strategic resilience.

Conclusion

Collecting SERP data at scale is a serious operational undertaking that requires technical sophistication, infrastructure investment, and compliance awareness. However, the payoff is significant: superior competitive intelligence, stronger forecasting accuracy, improved SEO visibility, and more efficient PPC spend.

Whether leveraging third-party providers or building internal systems, the guiding principles remain consistent: prioritize accuracy, engineer for scale, protect compliance, and focus relentlessly on translating data into strategic decision-making power. In highly competitive digital markets, those who understand the SERP—at scale—control the narrative of visibility.