Evaluating computer performance has become essential in an era where users rely on their systems for everything from gaming and video editing to software development and scientific research. PC benchmarking platforms like Geekbench provide structured, repeatable methods to measure and compare hardware performance across devices and operating systems. These tools have evolved from simple synthetic tests into comprehensive benchmarking ecosystems that inform purchasing decisions, enterprise deployments, and system optimizations.

TL;DR: PC benchmarking platforms such as Geekbench provide standardized methods for measuring CPU, GPU, and overall system performance. They enable fair comparisons across devices, operating systems, and hardware generations. While synthetic benchmarks cannot perfectly replicate real-world usage, they offer consistent, repeatable metrics that are invaluable for consumers and professionals alike. Choosing the right benchmarking tool depends on the intended use, whether gaming, content creation, or enterprise workload analysis.

Understanding PC Benchmarking Platforms

At their core, benchmarking platforms are designed to quantify performance under controlled conditions. They simulate specific workloads and measure how quickly and efficiently a system completes them. The resulting scores offer a simplified representation of computational power, typically broken down by categories such as:

- Single-core performance

- Multi-core performance

- GPU compute capability

- Memory bandwidth and latency

- Storage throughput

Geekbench, one of the most widely recognized platforms, has gained credibility by offering cross-platform compatibility. This means users can compare Windows PCs, macOS machines, Linux systems, and even mobile devices under a unified scoring methodology.

Why Geekbench Stands Out

Geekbench’s appeal lies in its consistent methodology and cross-device comparability. Unlike some benchmarking tools that focus exclusively on gaming or graphics performance, Geekbench evaluates general processing capabilities using real-world-inspired tests such as encryption, image processing, physics simulations, and machine learning workloads.

Its scoring model breaks down performance into:

- Single-Core Score: Reflects performance in tasks that rely primarily on a single thread, important for general responsiveness and many legacy applications.

- Multi-Core Score: Demonstrates the system’s ability to handle parallel computations, essential for video rendering, compression, and multitasking.

- Compute Score: Measures GPU performance through compute APIs such as OpenCL, Metal, and Vulkan, for tasks such as image processing and machine learning inference.

This structured scoring makes it easier for professionals, IT managers, and enthusiasts to benchmark systems in a repeatable and defensible way.

Other Notable Benchmarking Platforms

While Geekbench is a major player, it is not the only tool available. A balanced benchmarking strategy often involves using multiple platforms to gain a comprehensive understanding of system capabilities.

1. Cinebench

Cinebench focuses heavily on CPU rendering performance, rendering a 3D scene with the engine from Maxon's Cinema 4D. It is particularly valued by creative professionals working in 3D modeling and animation.

2. 3DMark

3DMark is widely used for evaluating graphics performance, especially for gaming PCs. It provides visually intensive benchmark scenarios that test GPUs under realistic gaming conditions.

3. PassMark PerformanceTest

PassMark offers a broad suite of tests covering CPU, GPU, memory, and disk performance. It is frequently used in enterprise environments due to its detailed reporting capabilities.

4. AIDA64

AIDA64 includes benchmarking tools alongside system diagnostics, making it useful not only for performance testing but also for hardware monitoring and troubleshooting.

How Benchmarking Works in Practice

Benchmarking platforms operate by running predefined workloads and measuring task completion time or operations per second. To ensure reliability, these workloads are:

- Standardized: The same operations are performed on every tested system.

- Reproducible: Tests can be run multiple times under consistent conditions.

- Isolated: External variables are minimized to reduce background interference.

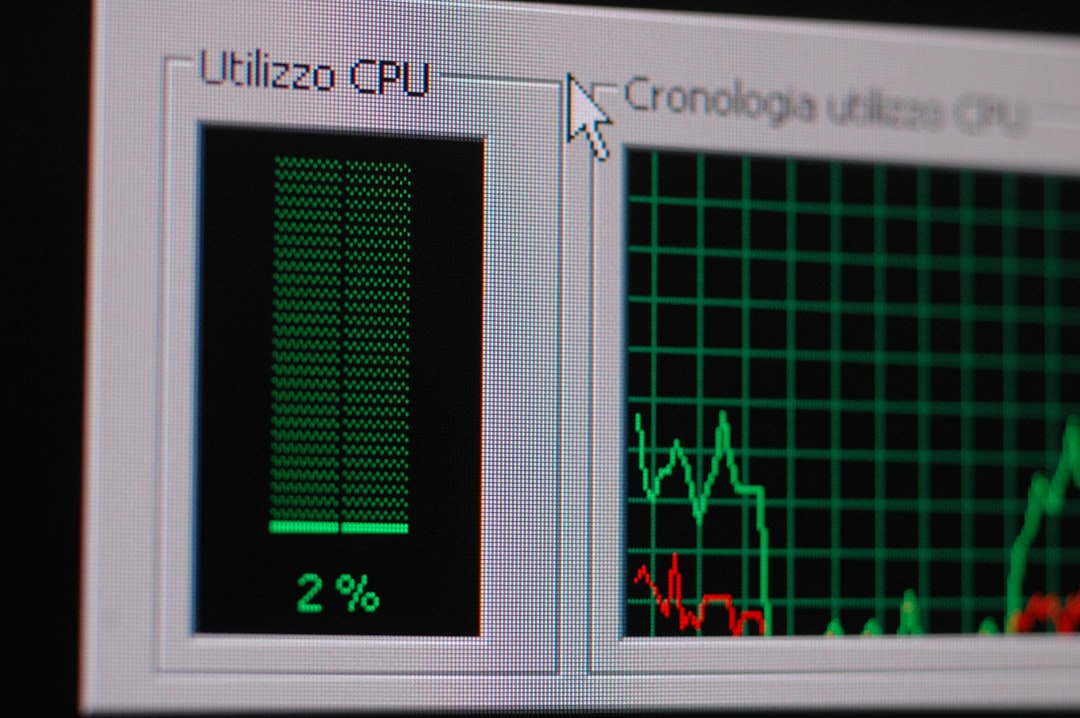

For instance, a CPU encryption test might measure how quickly the processor can encrypt a fixed dataset using a specific algorithm. A memory bandwidth test may measure how quickly data moves between RAM and CPU under load. The results of these individual tests are then combined into the final performance score.
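The idea of timing a fixed workload and folding several results into one number can be sketched in a few lines of Python. This is a minimal illustration, not how Geekbench or any other platform is implemented: SHA-256 hashing stands in for the encryption test because it ships with the standard library, and the names `measure_throughput`, `sha256_workload`, and `composite_score`, the baseline rates, and the scale of 1000 are all assumptions made for the example. A geometric mean is used for the composite because it is a common choice for combining ratios, not because any particular vendor is known to use this exact formula.

```python
import hashlib
import statistics
import time


def measure_throughput(workload, payload: bytes, repeats: int = 5) -> float:
    """Run the workload several times and return the best bytes-per-second rate."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        workload(payload)
        best = min(best, time.perf_counter() - start)
    # Guard against a zero reading on very coarse timers.
    return len(payload) / max(best, 1e-9)


def sha256_workload(payload: bytes) -> None:
    """Stand-in workload: hash a fixed dataset (hashing, not encryption)."""
    hashlib.sha256(payload).digest()


def composite_score(rates: dict, baseline_rates: dict, scale: float = 1000.0) -> float:
    """Combine per-test rates into one score relative to a hypothetical baseline system."""
    ratios = [rates[name] / baseline_rates[name] for name in baseline_rates]
    return scale * statistics.geometric_mean(ratios)


if __name__ == "__main__":
    payload = bytes(8 * 1024 * 1024)  # fixed 8 MiB dataset of zero bytes
    rate = measure_throughput(sha256_workload, payload)
    print(f"SHA-256 throughput: {rate / 1e6:.1f} MB/s")
```

Taking the best of several runs reduces the influence of background activity on a single timing; a system that is twice as fast as the baseline on one test and half as fast on another ends up at exactly the baseline score under the geometric mean.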

Single-Core vs Multi-Core Performance

One of the most significant distinctions in modern benchmarking is between single-core and multi-core performance.

Single-core performance remains important because many applications, especially older software, are not fully optimized for multiple cores. A system with high single-thread performance will often feel more responsive during everyday tasks such as browsing or document editing.

Multi-core performance, on the other hand, is critical for demanding professional workloads. Tasks like 4K video rendering, scientific analysis, software compilation, and virtualization thrive on additional cores.

Geekbench’s separation of these scores provides clarity, preventing users from relying solely on overall averages that might obscure specific strengths or weaknesses.
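One way to read the two scores together is to ask how close the multi-core result comes to ideal linear scaling. The sketch below assumes a simple model in which perfect scaling equals the single-core score multiplied by the core count; the function name `scaling_efficiency` and the example scores are hypothetical. Real processors rarely reach this ideal because of shared power budgets, memory contention, and mixed core types, so the figure is a rough indicator only.

```python
def scaling_efficiency(single_core: float, multi_core: float, cores: int) -> float:
    """Return multi-core score as a fraction of ideal linear scaling."""
    if single_core <= 0 or cores <= 0:
        raise ValueError("single_core and cores must be positive")
    return multi_core / (single_core * cores)


if __name__ == "__main__":
    # Hypothetical scores for an 8-core system.
    efficiency = scaling_efficiency(single_core=2000, multi_core=12000, cores=8)
    print(f"Scaling efficiency: {efficiency:.0%}")
```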

Interpreting Benchmark Results Responsibly

While benchmarking scores are useful, they must be interpreted with caution. Several factors can influence results:

- Thermal throttling due to inadequate cooling

- Power management settings

- Background applications

- Operating system updates

- Driver versions

Additionally, synthetic benchmarks do not always perfectly replicate real-world scenarios. A system optimized for gaming may perform exceptionally in 3DMark yet show only average results in productivity-focused tools. Therefore, performance evaluation should align with intended workloads.

The Role of Benchmark Databases

One of Geekbench’s strengths is its publicly searchable results database, the Geekbench Browser. Users can compare their scores against thousands of similarly configured systems worldwide. This facilitates:

- Hardware validation: Verifying whether a system performs as expected.

- Upgrade planning: Evaluating potential gains from CPU or GPU replacements.

- Procurement decisions: Comparing model generations before purchase.

For enterprise environments, such objective datasets can support procurement documentation and performance auditing processes.
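Hardware validation against a set of reference results can be as simple as a percentile rank. The sketch below assumes the reference scores have already been collected by hand into a list; it does not call any Geekbench service, and the function name `percentile_rank` and the numbers are invented for illustration.

```python
from bisect import bisect_right


def percentile_rank(score: float, reference_scores: list) -> float:
    """Return the percentage of reference scores that are at or below the given score."""
    if not reference_scores:
        raise ValueError("reference_scores must not be empty")
    ordered = sorted(reference_scores)
    return 100.0 * bisect_right(ordered, score) / len(ordered)


if __name__ == "__main__":
    # Hypothetical scores for systems with the same CPU model.
    reference = [2000, 2200, 2400, 2600, 2800]
    print(f"Percentile rank: {percentile_rank(2500, reference):.0f}")
```

A result far below the middle of the distribution for identical hardware is a hint to check cooling, power settings, or memory configuration before drawing conclusions about the hardware itself.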

Use Cases Across Different Audiences

Consumers

Home users frequently rely on Geekbench scores when comparing laptops or desktops. Simplified performance rankings allow buyers to evaluate devices beyond marketing claims.

IT Professionals

In corporate environments, benchmarking ensures that newly deployed systems meet performance expectations. Regression testing after updates can help detect anomalies early.
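A regression check after an update can be reduced to comparing a new score with a recorded baseline. The following is a minimal sketch: the function name `is_regression` and the 5% tolerance are assumptions, and a suitable threshold depends on how much run-to-run noise a given benchmark and fleet show.

```python
def is_regression(baseline: float, current: float, tolerance: float = 0.05) -> bool:
    """Return True when the current score falls more than `tolerance` below the baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    drop = (baseline - current) / baseline
    return drop > tolerance


if __name__ == "__main__":
    # Hypothetical scores recorded before and after an OS update.
    print(is_regression(baseline=2500, current=2300))  # 8% drop
    print(is_regression(baseline=2500, current=2450))  # 2% drop
```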

Developers

Software developers use benchmarking tools to validate performance optimizations and test applications across hardware ecosystems.

Hardware Reviewers

Independent reviewers integrate benchmarking into their standardized testing pipelines to ensure fairness and comparability between products.

Limitations of Synthetic Benchmarks

No benchmarking tool is without limitations. Synthetic tests, by nature, abstract complex tasks into controlled routines. Real-world usage can vary significantly depending on:

- Application optimization

- Driver support

- Operating system efficiency

- Thermal design and sustained load handling

A laptop, for instance, may achieve high benchmark results in short bursts but struggle to maintain sustained performance under prolonged heavy workloads due to heat constraints.

Benchmark data is therefore best combined with real-world application tests whenever possible.
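The gap between burst and sustained performance can be estimated by running the same benchmark back to back and comparing the later runs with the first. The sketch below assumes scores are listed in the order they were recorded; the function name `sustained_ratio`, the window of three runs, and the example values are hypothetical.

```python
from statistics import mean


def sustained_ratio(scores: list, window: int = 3) -> float:
    """Return the mean of the last `window` runs divided by the first run's score."""
    if window <= 0 or len(scores) < window:
        raise ValueError("need at least `window` scores")
    if scores[0] <= 0:
        raise ValueError("first score must be positive")
    return mean(scores[-window:]) / scores[0]


if __name__ == "__main__":
    # Hypothetical consecutive runs on a thin laptop.
    runs = [2600, 2550, 2400, 2300, 2250, 2250]
    print(f"Sustained / burst: {sustained_ratio(runs):.2f}")
```

A ratio well below 1.0 suggests the system slows down as heat builds up, which a single short run would not reveal.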

Emerging Trends in Benchmarking

As computing evolves, so do benchmarking methodologies. Increasingly, platforms are integrating:

- AI and machine learning workloads

- Battery efficiency measurements

- Cross-architecture comparisons between x86 and ARM

- Cloud compute validation

These advancements reflect the changing landscape of personal and professional computing. With ARM-based processors gaining adoption and AI acceleration becoming mainstream, benchmarking must adapt to remain relevant and meaningful.

Best Practices for Reliable Benchmarking

To ensure trustworthy results, professionals typically follow these guidelines:

- Run tests multiple times and report the average score together with the run-to-run variation.

- Close background applications before starting benchmarks.

- Ensure drivers and operating systems are up to date.

- Maintain adequate cooling and thermal stability.

- Document system specifications for transparency.

Following these steps reduces variability and increases confidence in the findings.
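Summarizing repeated runs takes only the standard library. This sketch reports the mean, the sample standard deviation, and the coefficient of variation as a percentage; the function name `summarize_runs` and the sample scores are illustrative, and what counts as an acceptable level of variation is a judgment call for each test setup.

```python
from statistics import mean, stdev


def summarize_runs(scores: list) -> dict:
    """Return the mean, sample standard deviation, and coefficient of variation (%)."""
    if len(scores) < 2:
        raise ValueError("need at least two runs to estimate variation")
    average = mean(scores)
    spread = stdev(scores)
    return {
        "mean": average,
        "stdev": spread,
        "cv_percent": 100.0 * spread / average,
    }


if __name__ == "__main__":
    # Hypothetical scores from five consecutive runs.
    summary = summarize_runs([2510, 2485, 2530, 2495, 2505])
    print(f"mean={summary['mean']:.0f} cv={summary['cv_percent']:.2f}%")
```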

Conclusion

PC benchmarking platforms like Geekbench play a crucial role in today’s technology ecosystem. They transform complex hardware performance characteristics into standardized metrics that can be compared across devices, operating systems, and generations. While no synthetic benchmark perfectly captures every real-world scenario, these tools provide a rational foundation for purchasing decisions, enterprise planning, and performance optimization.

When used responsibly and in conjunction with practical testing, benchmarking platforms offer a powerful, objective view into the computational capabilities of modern systems. In an increasingly performance-driven digital landscape, such clarity is not merely convenient; it is essential.